How Tilt Updates Kubernetes in Seconds, Not Minutes

When I bring my cat a box of toys, he loves the box and ignores the toys. I wish he’d pay attention to the work I did, but I don’t let it bother me because he’s a cat. Then I started getting the same reaction from Kubernetes developers.

We built our new tool, Tilt, to make Kubernetes updates fast. Really fast. Seconds-instead-of-minutes fast. Cloud-as-fast-as-laptop fast. But when we show it to developers, they love the UI and ignore the speed.

I understand why: Tilt’s Heads-Up Display collects errors, from build breakages to stack traces, into one layout so problems are easy to see. Developers see it and grok that Tilt lets them stop playing 20 questions with kubectl. But developers stop using tools that get in their way, so even if you start using Tilt because of the UI, you’ll keep using it because your 2-minute docker build && kubectl apply cycle now takes 5 seconds.

I’ll bring you along on our journey of slashing 4 different Kubernetes update overheads. Even though Tilt doesn’t expose each as a configurable option, I want to share the excitement of finding and smashing a sequence of bottlenecks.

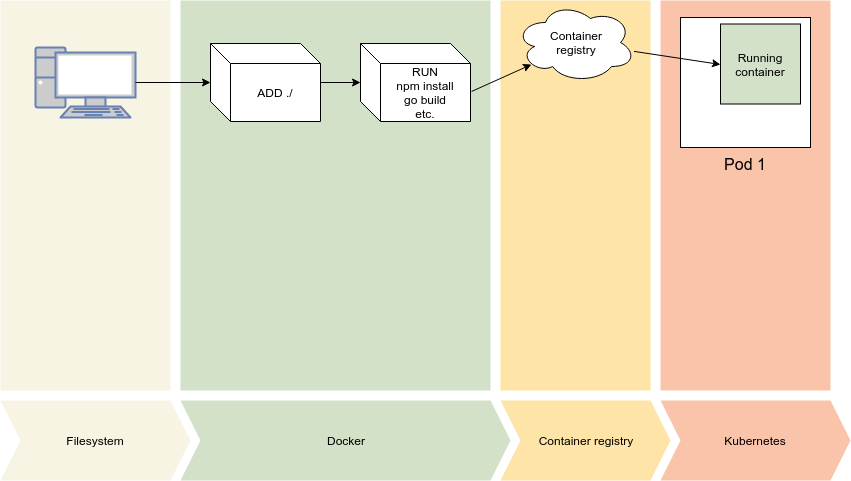

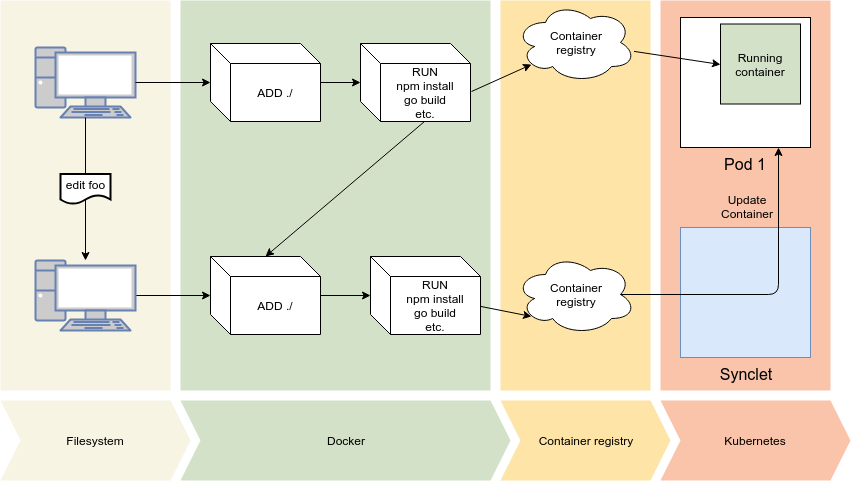

Vanilla Kubernetes Deploy

Before we can update, we have to deploy an initial version. The Kubernetes deploy pipeline:

- send source code to Docker (as a build context)
- run a build command in Docker to create a layer with the generated/compiled artifacts
- push the resulting image to a registry
- allocate a new pod, pull the image and start running
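In command-line terms, the first three steps are usually a docker build, a docker push, and a kubectl apply; Kubernetes performs the last step on its own once the manifest is applied. The minimal Python sketch below lists those commands in order. The image name and manifest path are hypothetical, and it assumes the manifest references the same image tag: if the tag never changes, kubectl apply sees an unchanged Deployment and rolls out nothing new.

```python
import shlex
import subprocess
from typing import List


def vanilla_deploy_commands(image: str, context_dir: str, manifest: str) -> List[List[str]]:
    """The commands behind one vanilla deploy, in pipeline order."""
    return [
        # Send the build context to Docker and run the Dockerfile's build steps.
        ["docker", "build", "-t", image, context_dir],
        # Push the resulting image to the registry named in the image reference.
        ["docker", "push", image],
        # Submit the manifest; Kubernetes then schedules a pod, and that node
        # pulls the image and starts the container.
        ["kubectl", "apply", "-f", manifest],
    ]


def run_vanilla_deploy(image: str, context_dir: str, manifest: str) -> None:
    """Run each step in order, stopping at the first failing command."""
    for cmd in vanilla_deploy_commands(image, context_dir, manifest):
        print("+", shlex.join(cmd))
        subprocess.run(cmd, check=True)
```

Calling run_vanilla_deploy("registry.example.com/myapp:dev-2", ".", "k8s/deployment.yaml") would run all three commands in sequence. Every update repeats the whole list, which is where the minutes go.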

Vanilla k8s deploy

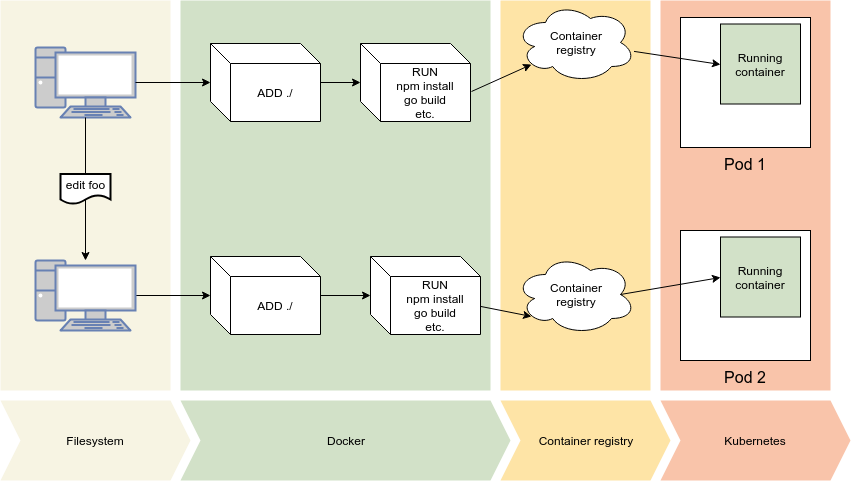

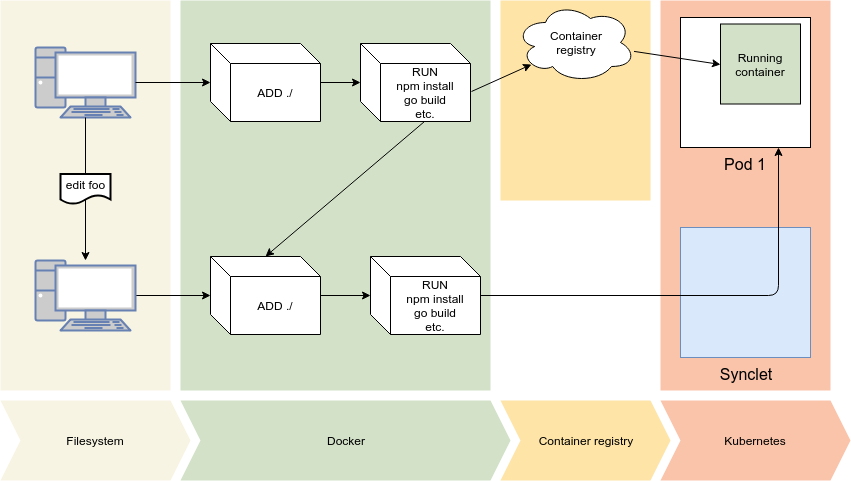

Second Time, Same as the First

Updating Kubernetes isn’t an update so much as a second deploy. Even if you changed just one file, Docker creates a new layer with your current source code and starts the build from scratch. (Docker’s layer caching and multistage builds can help, but they require a lot of cleverness.)
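To see why one edited file triggers a from-scratch build, here is a toy Python model of a layer cache. It is a simplification, not Docker's actual algorithm: each layer's key hashes its parent's key plus its instruction, and a COPY layer also hashes the contents of the files it copies. The Dockerfile lines and helper names are hypothetical.

```python
import hashlib
from typing import Dict, List


def layer_keys(instructions: List[str], context: Dict[str, bytes]) -> List[str]:
    """Toy cache keys: each layer hashes its parent's key and its own instruction.
    COPY layers also hash the path and content of every file in the build context."""
    keys: List[str] = []
    parent = b""
    for instruction in instructions:
        h = hashlib.sha256(parent)
        h.update(instruction.encode())
        if instruction.startswith("COPY"):
            for path in sorted(context):
                h.update(path.encode() + b"\0")
                h.update(hashlib.sha256(context[path]).digest())
        parent = h.digest()
        keys.append(h.hexdigest())
    return keys


def layers_to_rebuild(instructions: List[str],
                      old_context: Dict[str, bytes],
                      new_context: Dict[str, bytes]) -> List[int]:
    """Indices of layers whose cache key changed between two builds."""
    old = layer_keys(instructions, old_context)
    new = layer_keys(instructions, new_context)
    return [i for i, (a, b) in enumerate(zip(old, new)) if a != b]


# A hypothetical Dockerfile, one instruction per entry.
DOCKERFILE = [
    "FROM golang:1.11",
    "WORKDIR /app",
    "COPY . /app",
    "RUN go build -o /app/server .",
]

if __name__ == "__main__":
    before = {"main.go": b"package main\n", "util.go": b"package main\n// v1\n"}
    after = {**before, "util.go": b"package main\n// v2\n"}
    print(layers_to_rebuild(DOCKERFILE, before, after))  # [2, 3]
```

Changing one file invalidates the COPY layer, and because the RUN layer's key includes its parent's key, the compile step reruns too, with none of the previous build's compiler cache.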

Two vanilla k8s deploys

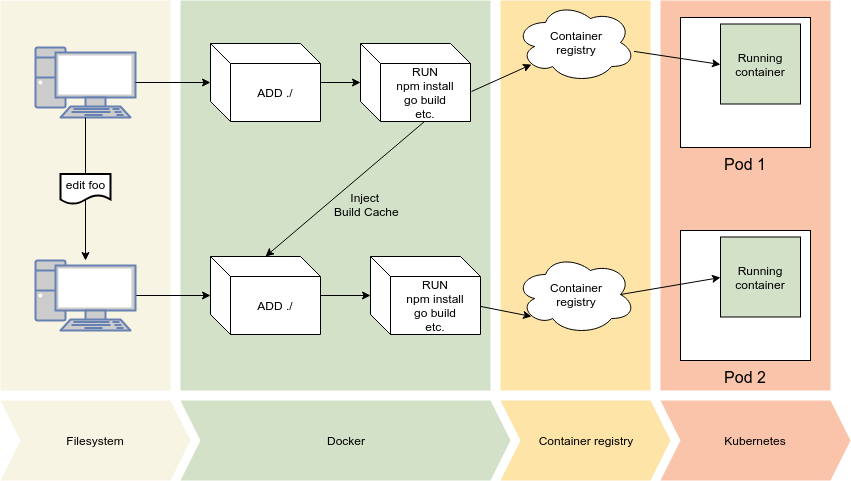

Incremental Build

Tilt’s image build API makes it easy to reuse your build cache on subsequent builds. In realistic projects, builds improve from 30s to 1s.

Broadly speaking, Docker images are built in two steps: first, you copy over your source code; second, you run any steps that compile code or generate artifacts (e.g., “go build”, “proto gen”, “npm install”). Tilt’s fast_build improves both steps. In the copy step, Tilt updates just the edited file(s). In the build step, Tilt injects the cache from the previous run.
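The post doesn't say how fast_build decides which files were edited. One common approach, sketched here in Python as an assumption rather than Tilt's implementation, is to hash every file in the source tree and compare against the snapshot from the previous build. The `snapshot` and `diff_snapshots` helpers are hypothetical, and the sketch covers only the copy step, not cache injection.

```python
import hashlib
from pathlib import Path
from typing import Dict, List, Tuple


def snapshot(root: str) -> Dict[str, str]:
    """Map each file under root (as a relative POSIX path) to the SHA-256 of its contents."""
    base = Path(root)
    return {
        p.relative_to(base).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in base.rglob("*")
        if p.is_file()
    }


def diff_snapshots(old: Dict[str, str], new: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Return (changed_or_added, removed) paths between two snapshots."""
    changed = sorted(path for path, digest in new.items() if old.get(path) != digest)
    removed = sorted(path for path in old if path not in new)
    return changed, removed
```

Only the paths in `changed` need to be copied into the image (and `removed` deleted from it), so a one-file edit moves one file instead of the whole build context.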

The second time you make an apple pie, reuse the previously created universe

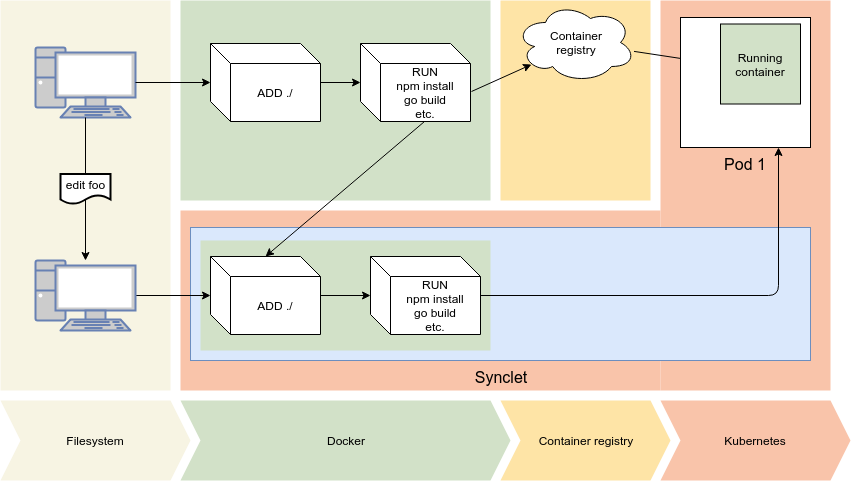

Incremental Deploy

We’re not done! Kubernetes is fast at starting new pods, but it can still take seconds. Tilt reuses existing pods instead.

A “Synclet” runs on the same node as your pod. When you update files, the Synclet copies them into the existing pod and restarts the container. (This is an example of the sidecar pattern.)
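The post doesn't describe how the Synclet writes into the running container or how it triggers the restart. Under those unknowns, the hypothetical `apply_sync` below treats a local directory as a stand-in for the container's filesystem and takes the restart as a callback. It checks every path before touching anything, so a bad path like `../etc/passwd` can't escape the sync root, then restarts once per batch of edits.

```python
import tempfile
from pathlib import Path
from typing import Callable, Dict, Iterable


def apply_sync(root: str, changed: Dict[str, bytes], removed: Iterable[str],
               restart: Callable[[], None]) -> None:
    """Write changed files into root, delete removed ones, then restart once."""
    base = Path(root).resolve()

    def target(rel: str) -> Path:
        # Resolve the destination and refuse anything outside the sync root.
        path = (base / rel).resolve()
        if base not in path.parents:
            raise ValueError(f"refusing to sync outside {base}: {rel!r}")
        return path

    # Validate every path first so a bad entry leaves the container untouched.
    writes = [(target(rel), data) for rel, data in changed.items()]
    deletes = [target(rel) for rel in removed]

    for dest, data in writes:
        dest.parent.mkdir(parents=True, exist_ok=True)
        dest.write_bytes(data)
    for dest in deletes:
        dest.unlink(missing_ok=True)
    # One restart for the whole batch; the pod itself is reused.
    restart()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        apply_sync(root, {"app/main.py": b"print('v2')\n"}, [],
                   restart=lambda: print("restart container"))
        print((Path(root) / "app/main.py").read_text())
```

Restarting the process inside an existing pod skips scheduling and container creation entirely, which is where the seconds saved come from.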

Don’t tear down your house each time you want to change a doorknob

Skip Registry

Container registries are amazing for images with high fan-out, but each of our images is used only once. Tilt cuts that overhead by sending updates directly to the Synclet.
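The post doesn't specify the wire format Tilt uses for these direct updates. As an illustrative assumption, a gzipped tar of only the changed files is a natural payload; the hypothetical `pack_update` and `unpack_update` below build and read one in memory with Python's standard `tarfile` module.

```python
import io
import tarfile
from typing import Dict


def pack_update(changed: Dict[str, bytes]) -> bytes:
    """Bundle only the changed files into an in-memory gzipped tar."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w:gz") as tar:
        for path, data in sorted(changed.items()):
            info = tarfile.TarInfo(name=path)
            info.size = len(data)
            info.mode = 0o644
            tar.addfile(info, io.BytesIO(data))
    return buf.getvalue()


def unpack_update(payload: bytes) -> Dict[str, bytes]:
    """Receiving side: read the regular files back out of the payload."""
    files: Dict[str, bytes] = {}
    with tarfile.open(fileobj=io.BytesIO(payload), mode="r:gz") as tar:
        for member in tar.getmembers():
            if member.isfile():
                extracted = tar.extractfile(member)
                if extracted is not None:
                    files[member.name] = extracted.read()
    return files
```

A payload like this is sized by the edit rather than by the image, and it skips both the push to and the pull from a registry; the receiving agent would then write the files into the container's filesystem.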

(This optimization creates corner cases when pods die and restart. Tilt watches your cluster to handle these cases and keep your personal instance in sync.)
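One such corner case: a container started fresh from the image has none of the files synced since that image was built. The post doesn't describe Tilt's handling, so the hypothetical `SyncTracker` below is only a sketch of one approach. It records every synced edit and, when a cluster watch reports a container it hasn't synced to, returns the full set of edits to replay. A deleted file is recorded as `None`.

```python
from typing import Dict, Optional


class SyncTracker:
    """Remember every file synced since the image was built, so a replacement
    container (started fresh from that image) can be brought back up to date."""

    def __init__(self) -> None:
        self.container_id: Optional[str] = None
        # path -> latest contents, or None if the file was deleted
        self.applied: Dict[str, Optional[bytes]] = {}

    def record(self, changed: Dict[str, Optional[bytes]]) -> None:
        """Call after each successful incremental sync."""
        self.applied.update(changed)

    def on_container_seen(self, container_id: str) -> Dict[str, Optional[bytes]]:
        """Call from a cluster watch. Returns every edit to replay if this is a
        new container, or {} if it is the one we have been syncing to."""
        if container_id == self.container_id:
            return {}
        self.container_id = container_id
        return dict(self.applied)

    def on_image_rebuilt(self) -> None:
        """A full image rebuild bakes all edits in, so nothing needs replaying."""
        self.applied.clear()
```

Replaying the accumulated edits keeps a restarted pod identical to the one it replaced, without forcing a full image rebuild.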

Mitigating the man-in-the-middle makes Mallory mad

Build in Cloud

A 1KB edit to a .go file can produce a 10MB binary diff, and one extra line in package.json can pull in dozens of libraries. Tilt sends the smaller source edit and runs the build on the same node as your personal instance.
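To put those sizes in perspective, here is the arithmetic using decimal units and an assumed 10 Mbit/s upload link (the link speed is an illustrative assumption, not a figure from the post). It ignores protocol overhead, compression, and the time the in-cluster build itself takes:

```latex
\begin{aligned}
\frac{10\,\text{MB}}{1\,\text{KB}} &= \frac{10 \times 10^{6}\,\text{B}}{10^{3}\,\text{B}} = 10^{4} \\
t_{\text{binary}} &= \frac{10 \times 10^{6} \times 8\,\text{bit}}{10^{7}\,\text{bit/s}} = 8\,\text{s} \\
t_{\text{source}} &= \frac{10^{3} \times 8\,\text{bit}}{10^{7}\,\text{bit/s}} = 8 \times 10^{-4}\,\text{s} = 0.8\,\text{ms}
\end{aligned}
```

On a slow uplink, shipping the binary alone would cost more than the whole 5-second update budget, while the source edit is effectively free to send.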

Tilt manages the complexity and headaches of running build commands in your cluster so you get faster updates.

The Code is coming from inside the Cluster

Stand on our shoulders

Sound good? Want this now?

- Read the Docs to get Tilt working with your project.
- Star our GitHub repo, file an issue, or fork it and submit a pull request.
- Join #tilt in the Kubernetes Slack to ask questions or request features.

Once you have vanilla deploys working, upgrade your builds to fast_build, as described in our docs, to get this goodness.

Cat Photo

Purring contentedly in his cardboard castle

Originally posted on the Windmill Engineering blog on Medium